No one can deny that the AI hype engine is in top gear, painting a picture of a future that you either buy into or are scared shitless about. Whole industries are being upended as we reimagine the workflows and roles of every employee. However – what happens when the lights go dark?

Let’s look at this another way.

- How much productivity do you lose when the Internet goes out?

- How much work gets done when Slack/Teams go down?

- How much communication happens when GSuite/O365 is offline?

We have all experienced these situations, and some of us even welcome the forced downtime. When the underlying infrastructure that you have predicated your business on goes dark, there is literally nothing you can do but wait. With most of our software being delivered via the browser, the internet going dark is a big-ass hit to an organization’s productivity. To mitigate such problems, we look to dual internet suppliers coming into offices.

The higher up the stack you go, the harder (if not impossible) it gets to have a backup. If Microsoft has a problem and O365 is offline, I can’t really switch over to GSuite to receive my email. It is not completely impossible, as we could go back old-skool and host our own email server. Madness? Is it though?

It’s not just availability that is drawing us back to owning over renting; cost is now a factor too. Running any of the SaaS products gets real expensive at even the lower end of the scale (looking at you, Atlassian – Jira gets very expensive very quickly – for a ticket system?!). This doesn’t include the cost of a SaaS going dark and the loss of your productivity – no SLA is going to make up for that.

AI is a whole new layer of dependency. So what happens when the public LLMs go offline?

Surely not Alan – I keep reading about the billions of investments from OpenAI, Google, etc.

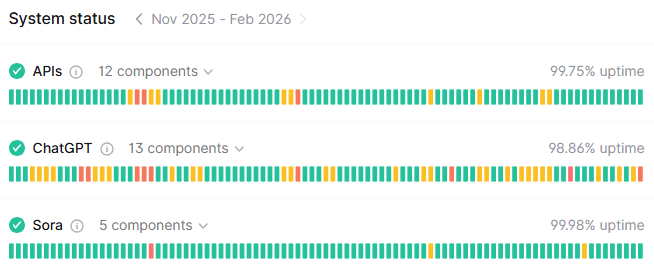

Here is the dirty little secret they don’t want you to know – they go down. A lot. Anyone who has built any sort of scale around public APIs will tell you they have had to spend effort working around a provider going offline. We have at HiBid, yet we still frequently get these types of messages in our logs (from all providers):

    Gemini API Error: 503 - {
      "error": {
        "code": 503,
        "message": "This model is currently experiencing high demand. Spikes in demand are usually temporary. Please try again later.",
        "status": "UNAVAILABLE"
      }
    }

Fortunately we recover well, as our systems automatically roll over to alternatives, all seamless to the customer. This is not the future we were promised though. Couple this with the huge cost, and we need to seek alternatives.
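To make the roll-over idea concrete, here is a rough sketch in Python. It is not our production code at HiBid, just an illustration of the pattern: try each provider in order, retry a couple of times with backoff, then move on to the next one. Every name in it (`ProviderUnavailable`, `complete_with_failover`, the two stub providers) is made up for this example; in real life each callable would wrap a vendor SDK or a self-hosted endpoint and raise on a 503 like the one above.

```python
import time
from typing import Callable, Sequence


class ProviderUnavailable(Exception):
    """Hypothetical error a provider wrapper raises when the upstream API is down."""


def complete_with_failover(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
    retries_per_provider: int = 2,
    backoff_seconds: float = 0.5,
) -> tuple[str, str]:
    """Try providers in order; return (provider_name, answer) from the first success."""
    errors = []
    for name, call in providers:
        for attempt in range(retries_per_provider):
            try:
                return name, call(prompt)
            except ProviderUnavailable as exc:
                errors.append(f"{name} attempt {attempt + 1}: {exc}")
                # Back off before retrying the same provider, but do not
                # waste time sleeping when we are about to switch providers.
                if attempt < retries_per_provider - 1:
                    time.sleep(backoff_seconds * (2 ** attempt))
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Stub providers for illustration only (assumptions, not real SDK calls).
def flaky_public_api(prompt: str) -> str:
    raise ProviderUnavailable("503 UNAVAILABLE")


def self_hosted_model(prompt: str) -> str:
    return f"(local) echo: {prompt}"
```

Calling `complete_with_failover("hi", [("public", flaky_public_api), ("local", self_hosted_model)])` falls through to the local stub once the public one has exhausted its retries. The ordering of the list is the policy: put whatever you trust most to be up last, so there is always somewhere to land.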

We are being driven back to hosting our own models, on servers we control, so we can guarantee a level of service. Hosting open-source models is proving to be extremely powerful, and no matter what anyone tells you, open-source is more than “good enough”. Not every AI question demands the latest and greatest LLM to answer it.

There has been another big benefit in this move back to self-hosting: cost. It is drastically cheaper, particularly at volume. If you are the sort of organization that prompts once or twice an hour, then yeah, the public LLMs will always be more cost-effective. If, on the other hand, you are processing millions of prompts a day, then the public LLMs are not your friend.
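If you want to find your own tipping point, the arithmetic is simple enough to sketch. The numbers below are placeholders I picked for illustration (a flat $2,000/month server, 1,000 tokens per prompt, $1 per million tokens), not real quotes from any provider or our actual bill. It also assumes a single flat server cost can absorb the volume, which will not hold forever, so treat it as a back-of-the-envelope starting point and plug in your own figures.

```python
def monthly_api_cost(prompts_per_day, tokens_per_prompt,
                     price_per_million_tokens, days=30):
    """Monthly spend on a pay-per-token public API."""
    total_tokens = prompts_per_day * tokens_per_prompt * days
    return total_tokens / 1_000_000 * price_per_million_tokens


def break_even_prompts_per_day(server_cost_per_month, tokens_per_prompt,
                               price_per_million_tokens, days=30):
    """Daily prompt volume at which a flat-cost server matches the API bill."""
    cost_per_prompt = tokens_per_prompt / 1_000_000 * price_per_million_tokens
    return server_cost_per_month / (cost_per_prompt * days)


# Hypothetical inputs: replace every one of these with your own numbers.
SERVER_COST = 2_000.0      # assumed flat monthly cost of self-hosting
TOKENS_PER_PROMPT = 1_000  # assumed average prompt + response size
PRICE_PER_MILLION = 1.0    # assumed blended API price in dollars

occasional = monthly_api_cost(48, TOKENS_PER_PROMPT, PRICE_PER_MILLION)
heavy = monthly_api_cost(2_000_000, TOKENS_PER_PROMPT, PRICE_PER_MILLION)
tipping_point = break_even_prompts_per_day(
    SERVER_COST, TOKENS_PER_PROMPT, PRICE_PER_MILLION
)

print(f"Twice an hour:    ${occasional:,.2f}/month on the API")
print(f"2M prompts a day: ${heavy:,.2f}/month on the API")
print(f"Break-even at roughly {tipping_point:,.0f} prompts/day")
```

With these made-up inputs the twice-an-hour user spends pocket change on the API, while the two-million-a-day user is paying many times the cost of the server. Where the line sits for you depends entirely on your token counts and prices.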

The recent PE Funcast episode called out this same prediction, along with a viewpoint Scott Galloway has also noted: that China could easily saturate the AI industry with cheap/free open-source models.

While I am illustrating this through our usage of AI in production systems, the same can be said of organizations that have armed their employees with the AI tools to make them productive. How many of your agentic systems have you built on top of OpenAI (ChatGPT) or Anthropic (Claude)?

How reliant are you on the public models being up? If you are, then you need to treat them with the same respect as that Internet line coming into the building. What is your backup?

I am bullish about the future of AI. That said, I believe OpenAI is the “Netscape” of the AI revolution. They came at a time to show us what was possible, then failed to deliver, and people were forced to look elsewhere (not dissimilar to what Tesla did for electric cars – they ignited an industry and now have fallen far behind – I say that as a former early Model S owner). We are in this phase now.

If you haven’t started looking at hosting your own models – then start NOW. You can start immediately by popping over to https://ollama.com/ and installing one of the open-source models on your local machine – disconnect from the Internet and you will still be prompting away. Most local users won’t even notice the speed difference.
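Once Ollama is running, your own code can talk to it over plain HTTP on your machine. Here is a minimal sketch using only the Python standard library. It assumes Ollama is listening on its default local port (11434) and that you have already pulled a model; `llama3.2` is just the example name I used here, so swap in whichever model you installed. The helper names are mine, not part of Ollama.

```python
import json
import urllib.request

# Ollama's default local endpoint; adjust if you changed the host or port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt, model="llama3.2"):
    """Build the POST request for a single, non-streaming completion."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt, model="llama3.2", timeout=120):
    """Send the prompt to the local Ollama server and return the text reply."""
    request = build_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read())
    # With "stream": False the full answer arrives in the "response" field.
    return body["response"]
```

With the server up, `print(ask_local_model("Why is the sky blue?"))` should work with the network cable unplugged, which is rather the point.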

Enterprise, you have options too. AWS Bedrock is a good start if you don’t want the hassle of maintaining servers, but once you let that requirement go, you can jump into a whole world of models that will more than likely power whatever part of your ecosystem you need, completely under your control on your own servers.

There is a trend pushing people back to physical media as streaming services become more expensive. We want to own our digital content. AI is driving us back to maintaining our own systems, and I for one think this is a good thing.

As a CTO, I can at least have things at my disposal to keep the AI agents up (as my CEO glares at me). If all you can do is refresh the status page, then you are not in control.

The public LLMs, however, do not share this view.

AI Disclaimer: Gemini Nano Banana Pro was used to generate the photo – from the ’83 movie WarGames.